Sidecar injection, transparent traffic hijacking, and routing process in Istio explained in detail

Based on Istio version 1.13, this article will present the following:

- What is the sidecar pattern and what advantages does it have?

- How is the sidecar injected in Istio?

- How does the sidecar proxy perform transparent traffic hijacking?

- How is the traffic routed upstream?

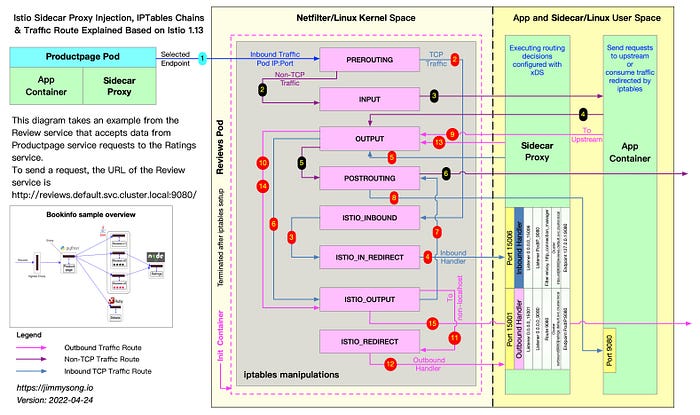

The figure above shows how the productpage service requests http://reviews.default.svc.cluster.local:9080/ and how, once the traffic reaches the reviews service, the sidecar proxy inside it intercepts and forwards that traffic.

Before the first step begins, the sidecar in the productpage Pod has already selected a Pod of the reviews service via EDS, knows its IP address, and sends it a TCP connection request.

The reviews service has three versions, each with one instance. Because the sidecars in all three versions work the same way, the following describes only the traffic forwarding steps of the sidecar in one of those Pods.

There is also a Chinese version of this blog post.

Sidecar pattern

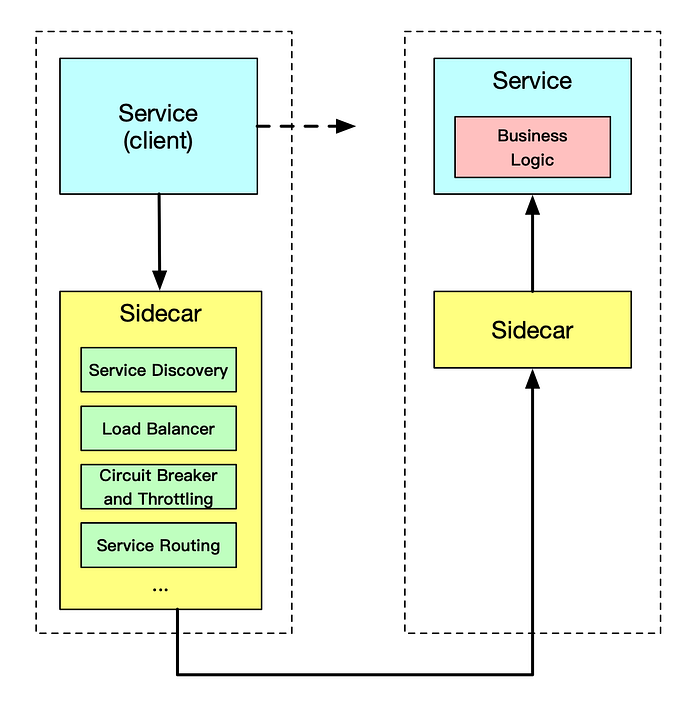

Dividing the functionality of an application into separate processes that run in the same minimal scheduling unit (e.g. a Pod in Kubernetes) can be considered the sidecar pattern. As shown in the figure above, the sidecar pattern allows you to add more features alongside your application without configuring additional third-party components or modifying the application code.

The sidecar is loosely coupled to the main application. It hides the differences between programming languages and provides common microservice functions, such as observability, monitoring, logging, configuration, and circuit breaking, in a unified way.

Advantages of using the Sidecar pattern

When a service mesh is deployed using the sidecar model, there is no need to run an agent on each node, but many copies of the same sidecar run in the cluster. In the sidecar deployment model, a companion container (such as Envoy or MOSN), called the sidecar container, is deployed next to each application container. The sidecar takes over all traffic into and out of the application container. In a Kubernetes Pod, the sidecar container is injected next to the original application container, and the two containers share storage, networking, and other resources.

Due to its unique deployment architecture, the sidecar model offers the following advantages.

- Abstracting functions unrelated to business logic into common infrastructure reduces the complexity of microservice code.

- It reduces code duplication in microservice architectures, because the same third-party component configuration and code no longer need to be written for every service.

- The sidecar can be upgraded independently of the application, which reduces the coupling between application code and the underlying platform.

Sidecar injection in Istio

Istio offers the following two sidecar injection methods.

- Manual injection using istioctl

- Automatic injection using the Istio sidecar injector

Whether the sidecar is injected manually or automatically, the injection process consists of the following steps.

- Kubernetes needs to know which Istio cluster the injected sidecar connects to and the configuration of that cluster.

- Kubernetes needs to know the configuration of the sidecar container itself, such as the image address and startup parameters.

- Kubernetes fills the sidecar injection template with the configuration parameters above and injects the resulting sidecar configuration next to the application container.

The sidecar can be injected manually with the istioctl kube-inject command, which takes the original Kubernetes YAML and outputs the injected configuration.

This command injects the sidecar using Istio's built-in sidecar configuration; see the official Istio documentation for details on how to use it.

When the injection is complete, you will see that Istio has added an init container and the sidecar proxy configuration to the original Pod template.
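To make the effect of injection concrete, here is a minimal Python sketch that treats a Pod spec as a plain dictionary and adds the two containers Istio injects. It is only an illustration under simplifying assumptions, not Istio's injection template: the function inject_sidecar and the trimmed container fields are hypothetical, and only the container names and the runAsUser value of 1337 come from this article.

```python
import copy

PROXY_UID = 1337  # default UID of the istio-proxy user (runAsUser of the sidecar)


def inject_sidecar(pod_spec: dict) -> dict:
    """Return a copy of pod_spec with the two containers that injection adds.

    Hypothetical sketch: real injection renders a much larger template.
    """
    injected = copy.deepcopy(pod_spec)  # leave the caller's spec untouched
    # Init container: sets up iptables redirection, then exits before the app starts.
    injected.setdefault("initContainers", []).append({"name": "istio-init"})
    # Sidecar container: runs the proxy (e.g. Envoy) next to the application container.
    injected.setdefault("containers", []).append(
        {"name": "istio-proxy", "securityContext": {"runAsUser": PROXY_UID}}
    )
    return injected


original = {"containers": [{"name": "productpage"}]}
injected = inject_sidecar(original)
print([c["name"] for c in injected["initContainers"]])  # ['istio-init']
print([c["name"] for c in injected["containers"]])      # ['productpage', 'istio-proxy']
```

Copying the spec first mirrors the fact that istioctl kube-inject outputs a new configuration rather than modifying the input file.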

Init container

An Init container is a specialized container that runs before the application containers start. It can contain utilities or setup scripts that are not present in the application image.

A Pod can specify multiple Init containers, and if there is more than one, they run sequentially: each Init container can only start after the previous one has completed successfully. Kubernetes initializes the Pod and starts the application containers only after all Init containers have finished.

Init containers use Linux namespaces, so they have a different view of the file system than the application containers. As a result, they can be given access to Secrets that the application containers cannot access.

During Pod startup, the Init containers start sequentially after the network and data volumes are initialized. Each container must exit successfully before the next one can start. If an Init container exits with an error, it is restarted according to the Pod's restartPolicy. However, if the Pod's restartPolicy is set to Always, Init containers use the OnFailure policy instead.
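The ordering and retry rules above can be modeled as a short simulation. This is a hypothetical sketch, not kubelet code: each Init container is represented by a callable that returns True on a successful exit, and max_attempts is an artificial cap added so the simulation terminates (the kubelet itself keeps retrying with a back-off).

```python
from typing import Callable, List


def effective_init_restart_policy(pod_restart_policy: str) -> str:
    """With restartPolicy Always, Init containers are retried as if it were OnFailure."""
    return "OnFailure" if pod_restart_policy == "Always" else pod_restart_policy


def run_init_containers(inits: List[Callable[[], bool]], pod_restart_policy: str,
                        max_attempts: int = 3) -> bool:
    """Run Init containers strictly in order; True means the app containers may start.

    max_attempts is an artificial cap for this simulation only.
    """
    policy = effective_init_restart_policy(pod_restart_policy)
    for init in inits:
        attempts = 0
        while True:
            attempts += 1
            if init():
                break  # success: only now may the next Init container start
            if policy == "Never" or attempts >= max_attempts:
                return False  # initialization failed; the Pod never becomes Ready
    return True  # every Init container succeeded: start the application containers


outcomes = iter([False, True])  # first run fails, the retry succeeds
print(run_init_containers([lambda: next(outcomes)], "Always"))  # True
print(run_init_containers([lambda: False], "Never"))            # False
```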

The Pod will not become Ready until all Init containers have succeeded. Ports on Init containers are not aggregated under a Service. A Pod that is initializing is in the Pending state, and its Initialized condition stays false until initialization completes. Each Init container terminates on its own once it has finished running.

Sidecar injection example analysis

For the detailed YAML configuration of the bookinfo application, see bookinfo.yaml in the official Istio samples; this section uses the productpage service from the bookinfo sample as the example.

The analysis covers the following aspects.

- Injection of Sidecar containers

- Creation of iptables rules

- The detailed process of routing

Let's look at the Dockerfile of the productpage container.

We see that no ENTRYPOINT is configured in the Dockerfile, so the CMD instruction, python productpage.py 9080, is the command that runs when the container starts. Keep that in mind as we look at the configuration after sidecar injection, which is generated with the following command.

$ istioctl kube-inject -f samples/bookinfo/platform/kube/bookinfo.yaml

We excerpt only the portion of the resulting YAML that belongs to the productpage Deployment.

Istio's injection into the application Pod mainly consists of:

- Init container istio-init: sets up iptables port forwarding in the Pod

- Sidecar container istio-proxy: runs a sidecar proxy, such as Envoy or MOSN

We analyze the two containers separately below.

Init container analysis

The Init container that Istio injects into the Pod is named istio-init. In the YAML after injection, you can see the startup arguments of this container.

Let's check the container's Dockerfile to see which command its ENTRYPOINT runs at startup.

We see that the entrypoint of the istio-init container is the /usr/local/bin/istio-iptables command, whose source code is in the tools/istio-iptables directory of the Istio source code repository.

Init container initiation

The Init container's entrypoint is the istio-iptables command-line tool.

The parameters passed to it are assembled into iptables rules. For details on the command's options, see tools/istio-iptables/pkg/cmd/root.go.
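The step of assembling parameters into iptables rules can be sketched as a Python function that returns iptables arguments for the nat table. This is a simplified, hypothetical reconstruction: the function build_nat_rules and its parameter names are our own, and the rule text is modeled on the nine ISTIO_OUTPUT rules analyzed later in this article rather than copied from istio-iptables. It only builds the strings; it does not call iptables.

```python
from typing import List, Sequence


def build_nat_rules(envoy_port: int = 15001, inbound_port: int = 15006,
                    proxy_uid: int = 1337, proxy_gid: int = 1337,
                    exclude_inbound_ports: Sequence[int] = ()) -> List[str]:
    """Return iptables arguments (nat table) for REDIRECT-mode interception."""
    rules = [
        # Target chains: outbound traffic to envoy_port, inbound traffic to inbound_port.
        "-N ISTIO_REDIRECT",
        f"-A ISTIO_REDIRECT -p tcp -j REDIRECT --to-ports {envoy_port}",
        "-N ISTIO_IN_REDIRECT",
        f"-A ISTIO_IN_REDIRECT -p tcp -j REDIRECT --to-ports {inbound_port}",
        # Inbound: every incoming TCP packet is checked by ISTIO_INBOUND.
        "-N ISTIO_INBOUND",
        "-A PREROUTING -p tcp -j ISTIO_INBOUND",
    ]
    # Excluded ports (e.g. the Prometheus port) are passed through untouched.
    rules += [f"-A ISTIO_INBOUND -p tcp --dport {p} -j RETURN" for p in exclude_inbound_ports]
    rules.append("-A ISTIO_INBOUND -p tcp -j ISTIO_IN_REDIRECT")
    # Outbound: the nine ISTIO_OUTPUT rules, in the order they are evaluated.
    rules += [
        "-N ISTIO_OUTPUT",
        "-A OUTPUT -p tcp -j ISTIO_OUTPUT",
        "-A ISTIO_OUTPUT -s 127.0.0.6/32 -o lo -j RETURN",
        f"-A ISTIO_OUTPUT ! -d 127.0.0.1/32 -o lo -m owner --uid-owner {proxy_uid} -j ISTIO_IN_REDIRECT",
        f"-A ISTIO_OUTPUT -o lo -m owner ! --uid-owner {proxy_uid} -j RETURN",
        f"-A ISTIO_OUTPUT -m owner --uid-owner {proxy_uid} -j RETURN",
        f"-A ISTIO_OUTPUT ! -d 127.0.0.1/32 -o lo -m owner --gid-owner {proxy_gid} -j ISTIO_IN_REDIRECT",
        f"-A ISTIO_OUTPUT -o lo -m owner ! --gid-owner {proxy_gid} -j RETURN",
        f"-A ISTIO_OUTPUT -m owner --gid-owner {proxy_gid} -j RETURN",
        "-A ISTIO_OUTPUT -d 127.0.0.1/32 -j RETURN",
        "-A ISTIO_OUTPUT -j ISTIO_REDIRECT",
    ]
    return ["-t nat " + rule for rule in rules]


rules = build_nat_rules(exclude_inbound_ports=[15090, 15092])
print(sum(r.startswith("-t nat -A ISTIO_OUTPUT ") for r in rules))  # 9
```

Order matters in this list: the exclusion rules for inbound ports must come before the catch-all jump to ISTIO_IN_REDIRECT, or they would never match.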

This container exists so that the sidecar proxy can intercept all inbound and outbound traffic of the Pod. It redirects all inbound traffic to port 15006 of the sidecar, except traffic to port 15090 (used by Prometheus) and port 15092 (Ingress Gateway), and it intercepts outbound traffic from the application container so that the sidecar, listening on port 15001, processes it before it leaves the Pod. See the official Istio documentation for the ports used by Istio.

Command analysis

This startup command does the following:

- Forward all inbound traffic destined for the application container to port 15006 of the sidecar.

- Identify the sidecar by the istio-proxy user, UID 1337, which is the user the istio-proxy container runs as by default (see the runAsUser field of the YAML configuration), so that the proxy's own outbound traffic is not redirected back to itself.

- Use the default REDIRECT mode to redirect traffic.

- Redirect all outbound traffic to the sidecar proxy (via port 15001).
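The four points above correspond to command-line flags of istio-iptables. As a hedged illustration, the sketch below re-creates just those flags with Python's argparse; the flag letters -p, -z, -u and -m are assumed to match the real tool, while the function name, destination names, and defaults (which simply mirror the values discussed above) are ours. The real tool accepts many more options.

```python
import argparse
from typing import List


def parse_istio_iptables_args(argv: List[str]) -> argparse.Namespace:
    """Parse a small, hypothetical subset of the istio-iptables flags."""
    parser = argparse.ArgumentParser(prog="istio-iptables")
    parser.add_argument("-p", dest="envoy_port", type=int, default=15001,
                        help="port that all outbound TCP traffic is redirected to")
    parser.add_argument("-z", dest="inbound_capture_port", type=int, default=15006,
                        help="port that all inbound TCP traffic is redirected to")
    parser.add_argument("-u", dest="proxy_uid", type=int, default=1337,
                        help="UID of the sidecar; its own outbound traffic is not redirected")
    parser.add_argument("-m", dest="inbound_mode", choices=["REDIRECT", "TPROXY"],
                        default="REDIRECT", help="inbound interception mode")
    return parser.parse_args(argv)


args = parse_istio_iptables_args(["-p", "15001", "-z", "15006", "-u", "1337", "-m", "REDIRECT"])
print(args.envoy_port, args.inbound_capture_port, args.proxy_uid, args.inbound_mode)
# 15001 15006 1337 REDIRECT
```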

Because the Init container terminates automatically after initialization, we cannot log into it to view the iptables rules. However, the result of its initialization persists in the Pod's network namespace, which the application container and the sidecar container share.

iptables manipulation analysis

To view the iptables configuration, we need to use nsenter as the root user to enter the network namespace of the sidecar container. Because kubectl cannot manipulate the Docker container remotely in privileged mode, we need to log on to the host where the productpage Pod is running.

If your Kubernetes cluster was deployed with minikube, you can log directly into the minikube virtual machine and switch to root. We list all the rules of the NAT (Network Address Translation) table: the parameters passed to istio-iptables when the Init container started select REDIRECT as the mode for redirecting inbound traffic to the sidecar, so only the NAT table contains rules. If TPROXY were selected, the mangle table would be configured as well. See the iptables command documentation for detailed usage.

Below we look only at the iptables rules related to productpage.

View the iptables rule chains in the network namespace of the process.

The focus here is on the 9 rules in the ISTIO_OUTPUT chain. For ease of reading, some of these rules are shown in the table below.

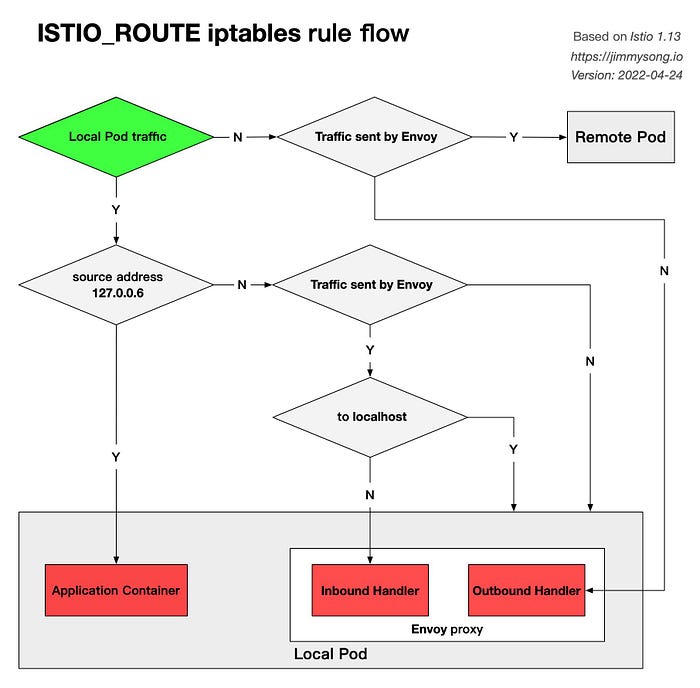

The following diagram shows the detailed flow of the ISTIO_OUTPUT rules.

I will explain the purpose of each rule in the order in which it appears, relating it to the steps and details in the illustration at the beginning of the article. Rules 5, 6, and 7 extend rules 2, 3, and 4 respectively from UID to GID; they serve similar purposes and are explained together. Note that the rules are evaluated in order: the first matching rule applies, and each later rule acts as a fallback for traffic that the earlier rules did not match. When the outbound interface (out) is lo (the local loopback interface), the traffic is destined for the local Pod; traffic sent from the Pod to the outside does not go through this interface. Therefore, only rules 4, 7, 8, and 9 apply to traffic sent from the reviews Pod to the outside.

Rule 1

- Purpose: To pass through traffic sent by the Envoy proxy to the local application container, so that it bypasses the Envoy proxy and goes directly to the application container.

- Corresponds to steps 6 through 7 in the diagram.

- Details: This rule causes all requests from 127.0.0.6 (this IP address is explained below) to jump out of the chain, return to the point where iptables called it (i.e. OUTPUT), and continue with the rest of the processing, i.e. the POSTROUTING rules, which send the traffic to its destination, such as the application container in the local Pod. Without this rule, traffic from the Envoy proxy to the application container in the Pod would match the next rule, rule 2, and enter the Inbound Handler again, creating an infinite loop. Putting this rule first prevents traffic from looping back into the Inbound Handler.

Rule 2, 5

- Purpose: Handle inbound traffic (traffic inside the Pod) from the Envoy proxy, but not requests to the localhost, and forward it to the Envoy proxy’s Inbound Handler via a subsequent rule.

- Corresponds to steps 6 through 7 in the diagram.

- Details: If the destination of the traffic is not localhost and the packet was sent by UID 1337 (i.e. the istio-proxy user, the Envoy proxy), the traffic is eventually forwarded to Envoy's Inbound Handler through ISTIO_IN_REDIRECT.

Rule 3, 6

- Purpose: To pass through traffic that the application container within the Pod sends to the local Pod.

- Details: If the traffic was not sent by the Envoy user, it jumps out of the chain and returns to OUTPUT, which then calls POSTROUTING, and the traffic goes straight to its destination.

Rule 4, 7

- Purpose: To pass through outbound requests sent by Envoy proxy.

- Corresponds to steps 14 through 15 in the illustration.

- Details: If the request was made by the Envoy proxy, it returns to OUTPUT, continues on to the POSTROUTING rules, and eventually reaches the destination directly.

Rule 8

- Purpose: Passes requests from within the Pod to the localhost.

- Details: If the destination of the request is localhost, it returns to OUTPUT and calls POSTROUTING to access localhost directly.

Rule 9

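- Purpose: To forward all other traffic in the Pod to the Envoy proxy's Outbound Handler.

- Details: If the traffic matches none of the rules above, it jumps to the ISTIO_REDIRECT chain and is redirected to Envoy's Outbound Handler on port 15001.

Together, these rules avoid dead loops when the Envoy proxy routes traffic to the application, guarantee that traffic is routed correctly to the Envoy proxy, and still allow real outbound requests to be made.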
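Because the rules are evaluated top to bottom and the first match wins, the chain can be modeled as an ordered list of conditions. The following sketch is a hypothetical Python model of the nine ISTIO_OUTPUT rules (IPv4 only, REDIRECT mode). The Packet fields, the function istio_output, the Pod IP 10.4.0.5, the ratings ClusterIP 10.96.0.20, and the application UID 1000 are illustrative assumptions; 1337, 127.0.0.6, and the endpoint 10.4.1.12 come from this article.

```python
import ipaddress
from dataclasses import dataclass
from typing import Tuple

PROXY_UID = PROXY_GID = 1337
LOCALHOST = ipaddress.ip_network("127.0.0.1/32")
INBOUND_PASSTHROUGH = ipaddress.ip_network("127.0.0.6/32")


@dataclass
class Packet:
    src: str
    dst: str
    out_iface: str  # "lo" when the destination is the local Pod
    uid: int        # owner UID of the sending socket
    gid: int        # owner GID of the sending socket


def istio_output(pkt: Packet) -> Tuple[int, str]:
    """Return (rule number, target) of the first ISTIO_OUTPUT rule that matches."""
    src, dst = ipaddress.ip_address(pkt.src), ipaddress.ip_address(pkt.dst)
    lo = pkt.out_iface == "lo"
    rules = [
        (src in INBOUND_PASSTHROUGH and lo, "RETURN"),                                 # 1
        (dst not in LOCALHOST and lo and pkt.uid == PROXY_UID, "ISTIO_IN_REDIRECT"),  # 2
        (lo and pkt.uid != PROXY_UID, "RETURN"),                                       # 3
        (pkt.uid == PROXY_UID, "RETURN"),                                              # 4
        (dst not in LOCALHOST and lo and pkt.gid == PROXY_GID, "ISTIO_IN_REDIRECT"),  # 5
        (lo and pkt.gid != PROXY_GID, "RETURN"),                                       # 6
        (pkt.gid == PROXY_GID, "RETURN"),                                              # 7
        (dst in LOCALHOST, "RETURN"),                                                  # 8
        (True, "ISTIO_REDIRECT"),                                                      # 9
    ]
    for number, (matched, target) in enumerate(rules, start=1):
        if matched:
            return number, target
    raise AssertionError("unreachable: rule 9 always matches")


POD_IP = "10.4.0.5"  # hypothetical IP of the reviews Pod
# Envoy's Inbound Handler forwarding to the app from 127.0.0.6: rule 1 passes it through.
print(istio_output(Packet("127.0.0.6", POD_IP, "lo", 1337, 1337)))     # (1, 'RETURN')
# The app (any UID other than 1337) calling the ratings ClusterIP: sent to Envoy by rule 9.
print(istio_output(Packet(POD_IP, "10.96.0.20", "eth0", 1000, 1000)))  # (9, 'ISTIO_REDIRECT')
# Envoy sending the request on to the ratings endpoint: rule 4 passes it through.
print(istio_output(Packet(POD_IP, "10.4.1.12", "eth0", 1337, 1337)))   # (4, 'RETURN')
```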

About RETURN target

You may notice that many of the rules above use the RETURN target. When such a rule matches, processing jumps out of the current chain, returns to the point where iptables called it (in our case OUTPUT), and continues with the rest of the processing (in our case the POSTROUTING rules), which sends the traffic to its destination address. You can intuitively think of RETURN as pass-through.

About the 127.0.0.6 IP address

The IP 127.0.0.6 is the default InboundPassthroughClusterIpv4 in Istio and is specified in Istio's code. After traffic enters the Envoy proxy, Envoy binds its upstream connections to this source address, so that the traffic it sends on to the application container in the Pod is passed through (i.e. passthrough) instead of being caught by the Outbound Handler; this traffic is destined for the Pod itself and is not real outbound traffic. See Istio Issue-29603 for more information on why this IP was chosen for traffic passthrough.

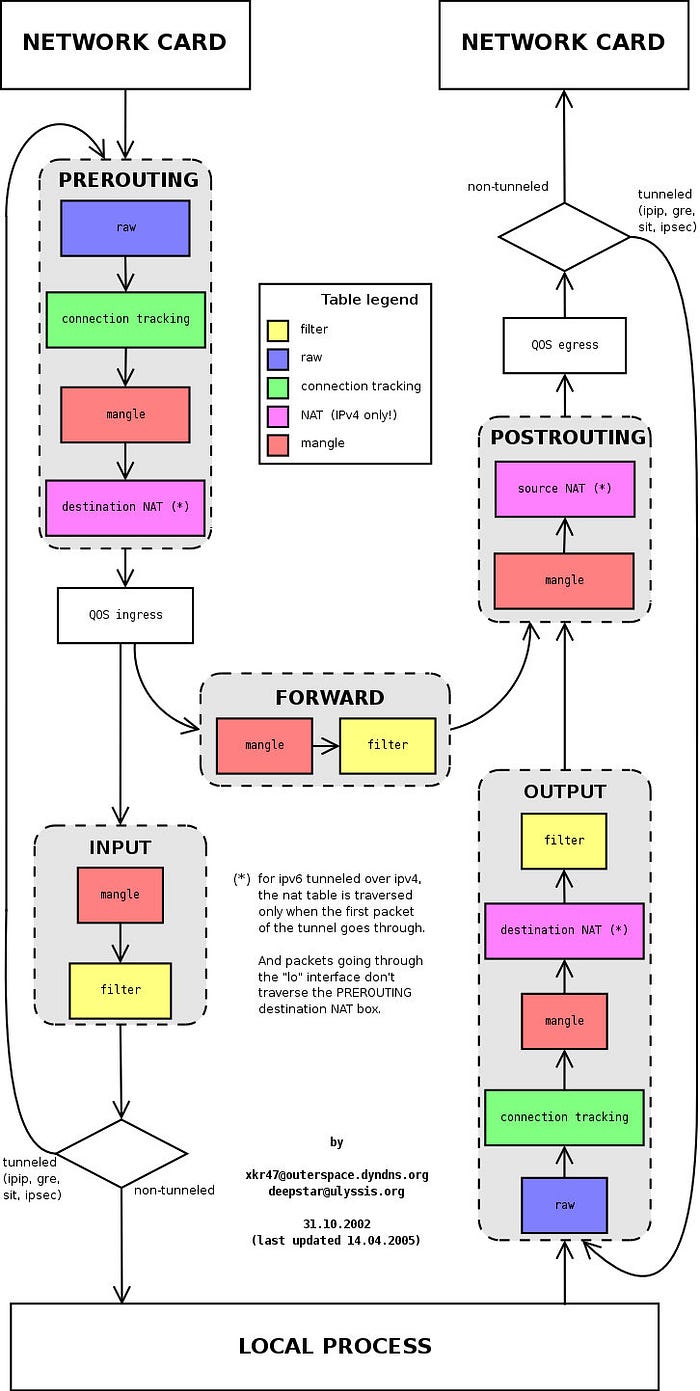

Understand iptables

iptables is the management tool for netfilter, the firewall software in the Linux kernel. iptables runs in user space and is part of the netfilter project. Netfilter itself runs in kernel space and provides not only network address translation but also packet mangling and packet-filtering firewall functions.

Before looking at the iptables rules that the Init container sets up, let's go over iptables and its rule configuration.

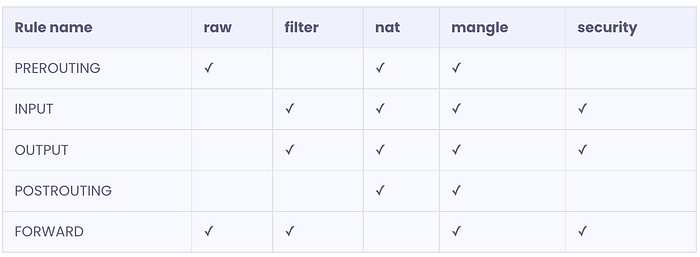

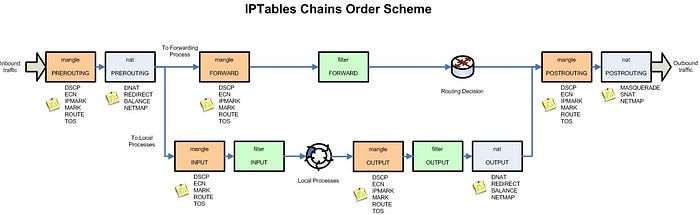

The following figure shows the iptables call chain.

iptables

The iptables version used in the Init container is v1.6.0, which has 5 tables:

- raw is used to configure packets so that they are not tracked by the connection tracking system.

- filter is the default table and holds all firewall-related operations.

- nat is used for network address translation (e.g., port forwarding).

- mangle is used to modify specific packets (see mangled packets).

- security is used for Mandatory Access Control networking rules.

Note: In this example, only the NAT table is used.

Each table contains a different set of built-in chains.
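For reference, the built-in chains of each table on Linux are summarized below as a Python mapping. This reflects standard netfilter behavior rather than anything Istio-specific (the nat table's INPUT chain exists on kernels 2.6.34 and later); the helper tables_with_chain is a hypothetical convenience for the example.

```python
from typing import Dict, List

BUILTIN_CHAINS: Dict[str, List[str]] = {
    "raw": ["PREROUTING", "OUTPUT"],
    "mangle": ["PREROUTING", "INPUT", "FORWARD", "OUTPUT", "POSTROUTING"],
    "nat": ["PREROUTING", "INPUT", "OUTPUT", "POSTROUTING"],
    "filter": ["INPUT", "FORWARD", "OUTPUT"],
    "security": ["INPUT", "FORWARD", "OUTPUT"],
}


def tables_with_chain(chain: str) -> List[str]:
    """Return the tables that define the given built-in chain."""
    return [table for table, chains in BUILTIN_CHAINS.items() if chain in chains]


print(tables_with_chain("POSTROUTING"))  # ['mangle', 'nat']
print(tables_with_chain("OUTPUT"))       # ['raw', 'mangle', 'nat', 'filter', 'security']
```

Every table has an OUTPUT chain, which is why locally generated traffic, such as the application's request to ratings, can be caught in the nat table's OUTPUT chain where the jump to ISTIO_OUTPUT lives.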

Understand iptables rules

View the default iptables rules in the istio-proxy container. By default, iptables lists the rules in the filter table.

We see three default chains, INPUT, FORWARD, and OUTPUT, with the first line of output in each chain indicating the chain name (INPUT/FORWARD/OUTPUT in this case), followed by the default policy (ACCEPT).

In the structure of iptables, traffic passes through the INPUT chain before it enters the upper layers of the protocol stack.

Each chain can contain multiple rules, which are evaluated in order from first to last. Let's look at the column headers of the rule listing.

- pkts: number of packets matched by the rule

- bytes: total size of the matched packets (in bytes)

- target: the target executed if the packet matches the rule

- prot: protocol, such as TCP, UDP, ICMP, or ALL

- opt: rarely used; shows IP options

- in: inbound network interface

- out: outbound network interface

- source: source IP address or subnet of the traffic, or anywhere

- destination: destination IP address or subnet of the traffic, or anywhere

There is also a column without a header, shown at the end, which holds the rule's options. These act as extended match conditions that complement the previous columns. Together, prot, opt, in, out, source, destination, and the header-less column after destination form the match criteria, and the target is executed when traffic matches them.
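To make the column layout concrete, the sketch below splits one line of iptables -L -v output into the named columns above, treating everything after destination as the header-less options column. The function parse_rule_line and the sample line (shaped like rule 2 of ISTIO_OUTPUT) are illustrative assumptions; exact spacing and option text can vary between iptables versions.

```python
from typing import Dict

COLUMNS = ["pkts", "bytes", "target", "prot", "opt", "in", "out", "source", "destination"]


def parse_rule_line(line: str) -> Dict[str, str]:
    """Map the whitespace-separated columns of one rule line to their headers."""
    fields = line.split(None, len(COLUMNS))  # at most 9 splits: the rest stays intact
    rule = dict(zip(COLUMNS, fields))
    # The header-less trailing column holds extended match options, if any.
    rule["options"] = fields[len(COLUMNS)].strip() if len(fields) > len(COLUMNS) else ""
    return rule


sample = ("    0     0 ISTIO_IN_REDIRECT  all  --  *      lo      0.0.0.0/0"
          "            !127.0.0.1            owner UID match 1337")
rule = parse_rule_line(sample)
print(rule["target"], rule["out"], rule["destination"], rule["options"])
# ISTIO_IN_REDIRECT lo !127.0.0.1 owner UID match 1337
```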

Types supported by TARGET

Target types include ACCEPT, REJECT, DROP, LOG, SNAT, MASQUERADE, DNAT, REDIRECT, and RETURN, as well as jumps to other chains. Rules in a chain are matched in order, and the first matching rule usually determines where the packet goes. RETURN is the exception: like a return statement in a programming language, it goes back to the calling point, where processing continues with the next rule.

From the output, you can see that the Init container does not create any rules in the default iptables chains; instead, it creates new chains.

The traffic routing process explained

Traffic routing is divided into two processes, inbound and outbound. Below, we analyze each in detail based on the example above and the configuration of the sidecar.

Understand Inbound Handler

The role of the Inbound Handler is to pass downstream traffic intercepted by iptables to localhost and establish a connection to the application container within the Pod. Assuming one of the Pods is named reviews-v1-545db77b95-jkgv2, run istioctl proxy-config listener reviews-v1-545db77b95-jkgv2 --port 15006 to see which Listeners are in that Pod.

The fields in the output have the following meanings.

- ADDRESS: downstream address

- PORT: The port the Envoy listener is listening on

- MATCH: The transport protocol used by the request or the matching downstream address

- DESTINATION: Route destination

The iptables rules in the reviews Pod hijack inbound traffic to port 15006. From the output, we can see that Envoy's Inbound Handler is listening on port 15006, and requests to port 9080 destined for any IP are routed to the inbound|9080|| cluster.

As you can see in the last two rows of the Pod's Listener list, the Listener for 0.0.0.0:15006/TCP (whose actual name is virtualInbound) listens for all inbound traffic and contains the matching rules, so traffic from any IP to port 9080 is routed. To see the detailed configuration of this Listener in JSON format, run istioctl proxy-config listeners reviews-v1-545db77b95-jkgv2 --port 15006 -o json.

Since the Inbound Handler routes traffic from any address to port 9080 of this Pod to the inbound|9080|| cluster, let's run istioctl pc cluster reviews-v1-545db77b95-jkgv2 --port 9080 --direction inbound -o json to view that cluster's configuration.

We see that the cluster type is ORIGINAL_DST, which sends the traffic to its original destination address (the Pod IP). Because the original destination is the current Pod, notice also that the value of upstreamBindConfig.sourceAddress.address is rewritten to 127.0.0.6. This echoes the first rule of the iptables ISTIO_OUTPUT chain above, under which the traffic is passed through to the application container inside the Pod.
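The two fields that matter here can be checked programmatically. The sketch below parses a hypothetical, heavily trimmed excerpt shaped like the istioctl pc cluster ... -o json output (real output contains many more fields) and confirms that the inbound cluster uses ORIGINAL_DST and binds its upstream connections to 127.0.0.6, the source address that rule 1 of ISTIO_OUTPUT passes through. The function name inbound_passthrough is an assumption for the example.

```python
import json
from typing import Optional, Tuple

# Hypothetical, trimmed excerpt; only the fields discussed above are kept.
SAMPLE = """[
  {
    "name": "inbound|9080||",
    "type": "ORIGINAL_DST",
    "upstreamBindConfig": {"sourceAddress": {"address": "127.0.0.6"}}
  }
]"""


def inbound_passthrough(clusters_json: str, name: str) -> Optional[Tuple[str, str]]:
    """Return (cluster type, upstream bind address) for the named cluster."""
    for cluster in json.loads(clusters_json):
        if cluster.get("name") == name:
            bind = cluster.get("upstreamBindConfig", {}).get("sourceAddress", {})
            return cluster.get("type"), bind.get("address")
    return None  # no cluster with that name


print(inbound_passthrough(SAMPLE, "inbound|9080||"))  # ('ORIGINAL_DST', '127.0.0.6')
```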

Understand Outbound Handler

The reviews service sends HTTP requests to the ratings service at http://ratings.default.svc.cluster.local:9080/. The role of the Outbound Handler is to take the traffic from the local application that iptables has intercepted and decide, via the sidecar, how to route it upstream.

Requests from the application container are outbound traffic. After iptables hijacks them, they are handed to the Outbound Handler, where they pass through the virtualOutbound Listener and then the 0.0.0.0_9080 Listener. The upstream cluster is found through Route 9080, and the cluster's endpoints are found through EDS to complete the routing.
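The lookup chain described above (Host header to VirtualHost, VirtualHost to cluster, cluster to endpoints) can be modeled in a few lines. This is a hypothetical sketch, not Envoy's implementation: it uses exact domain matching only (Envoy also supports wildcard domains), a hand-written subset of domains, an endpoint port of 9080 that we assume, and round-robin selection as a stand-in for whatever load-balancing policy is configured. The cluster name format direction|port|subset|host follows the names shown in this article.

```python
from typing import Dict, List, Optional, Tuple

CLUSTER = "outbound|9080||ratings.default.svc.cluster.local"

# Route "9080": cluster -> domains of the VirtualHost that routes to it (a subset).
ROUTE_9080: Dict[str, List[str]] = {
    CLUSTER: ["ratings.default.svc.cluster.local", "ratings.default.svc.cluster.local:9080",
              "ratings", "ratings:9080"],
}
# EDS result for the cluster; the article shows the endpoint 10.4.1.12.
EDS: Dict[str, List[str]] = {CLUSTER: ["10.4.1.12:9080"]}


def parse_cluster_name(name: str) -> Tuple[str, int, str, str]:
    """Split 'direction|port|subset|host' into its four parts."""
    direction, port, subset, host = name.split("|")
    return direction, int(port), subset, host


def route(host_header: str, request_number: int = 0) -> Optional[str]:
    """Pick an endpoint for a request, or None if no VirtualHost matches."""
    for cluster, domains in ROUTE_9080.items():
        if host_header in domains:  # exact match only in this sketch
            endpoints = EDS[cluster]
            return endpoints[request_number % len(endpoints)]  # round robin
    return None


print(parse_cluster_name(CLUSTER))  # ('outbound', 9080, '', 'ratings.default.svc.cluster.local')
print(route("ratings.default.svc.cluster.local:9080"))  # 10.4.1.12:9080
```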

Route ratings.default.svc.cluster.local:9080

To see how reviews requests the ratings service, run istioctl proxy-config routes reviews-v1-545db77b95-jkgv2 --name 9080 -o json to view the route configuration. Because the sidecar matches a VirtualHost against its domains using the Host in the HTTP header, only the ratings.default.svc.cluster.local:9080 VirtualHost is relevant here.

From this VirtualHost configuration, you can see that traffic is routed to the cluster outbound|9080||ratings.default.svc.cluster.local.

Endpoint outbound|9080||ratings.default.svc.cluster.local

Run istioctl proxy-config endpoint reviews-v1-545db77b95-jkgv2 --port 9080 -o json --cluster "outbound|9080||ratings.default.svc.cluster.local" to view the Endpoint configuration.

We see that the endpoint address is 10.4.1.12. A cluster can actually have one or more endpoints, and the sidecar selects an appropriate one according to its load-balancing rules. At this point, the reviews Pod has found the endpoint of its upstream service, ratings.

Summary

This article uses the bookinfo example provided by Istio to guide readers through the implementation details behind sidecar injection, transparent traffic hijacking with iptables, and traffic routing in the sidecar. The sidecar pattern and transparent traffic hijacking are core features of the Istio service mesh. Understanding the process and implementation details behind them will help you understand how a service mesh works and the content in the later chapters of the Istio Handbook, so I hope readers will try this from scratch in their own environment to deepen their understanding.

Using iptables for traffic hijacking is just one way to hijack traffic in the data plane of a service mesh, and there are other approaches. The following is quoted from the traffic hijacking section on the official website of MOSN, a cloud-native network proxy.

Problems with using iptables for traffic hijacking

Currently, Istio uses iptables for transparent hijacking, which has three main problems.

- The conntrack module is needed for connection tracking. With a large number of connections, this consumes a lot of resources and may fill up the tracking table; to avoid this, a common industry practice is to disable conntrack.

- iptables is a common module with a global effect, and related changes cannot be explicitly prohibited, which makes it less controllable.

- Redirecting traffic with iptables essentially exchanges data over the loopback interface. Outbound traffic traverses the protocol stack twice, which costs forwarding performance under high concurrency.

These problems do not appear in every scenario. For example, where the number of connections is small and the NAT table is not used, iptables is a simple solution that meets the requirements. To suit a wider range of scenarios, transparent hijacking needs to address all three issues.

Transparent hijacking optimization

In order to optimize the performance of transparent traffic hijacking in Istio, the following solutions have been proposed by the industry.

Traffic Hijacking with eBPF using the Merbridge Open Source Project

Merbridge, open-sourced by DaoCloud in early 2022, is a plug-in that leverages eBPF to accelerate the Istio service mesh. Using Merbridge can improve network performance in the data plane to some extent.

Merbridge leverages the sockops and redir capabilities of eBPF to transfer packets directly from inbound sockets to outbound sockets. eBPF provides the bpf_msg_redirect_hash function to forward application packets directly.

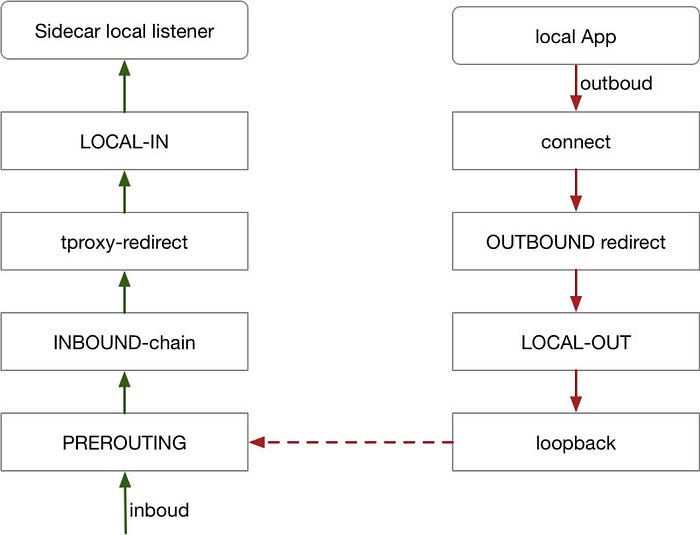

Handling inbound traffic with tproxy

tproxy can redirect inbound traffic without changing the destination IP/port in the packet and without connection tracking, so it avoids the problem of the conntrack module tracking a large number of connections. Because of kernel version limitations, applying tproxy to outbound traffic has shortcomings. Istio currently supports handling inbound traffic with tproxy.

Use hook connect to handle outbound traffic

To support more application scenarios, the outbound direction is handled by hooking connect.

Whichever transparent hijacking scheme is used, the real destination IP/port must be recovered. The iptables scheme obtains it through getsockopt, tproxy can read the destination address directly, and the hook connect scheme, by modifying the call interface, reads it in a way similar to tproxy.
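In the iptables scheme, the proxy recovers the pre-REDIRECT destination of an accepted connection with getsockopt and the SO_ORIGINAL_DST option. The Python sketch below shows that technique for IPv4 on Linux. SO_ORIGINAL_DST is 80 in the Linux header linux/netfilter_ipv4.h and is defined by hand here; the helper names are hypothetical, and original_dst only works on a connection that iptables actually redirected (otherwise getsockopt raises OSError).

```python
import socket
import struct
from typing import Tuple

SO_ORIGINAL_DST = 80  # from <linux/netfilter_ipv4.h>; IPv4 only


def decode_sockaddr_in(buf: bytes) -> Tuple[str, int]:
    """Decode a 16-byte struct sockaddr_in into (IP address, port)."""
    (port,) = struct.unpack_from("!H", buf, 2)  # sin_port, network byte order
    ip = socket.inet_ntoa(buf[4:8])             # sin_addr, 4 bytes
    return ip, port


def original_dst(conn: socket.socket) -> Tuple[str, int]:
    """Return the destination a client dialed before iptables REDIRECT rewrote it."""
    buf = conn.getsockopt(socket.IPPROTO_IP, SO_ORIGINAL_DST, 16)
    return decode_sockaddr_in(buf)


# Decoding a hand-built sockaddr_in for 10.4.1.12:9080:
raw = (struct.pack("=H", socket.AF_INET) + struct.pack("!H", 9080)
       + socket.inet_aton("10.4.1.12") + bytes(8))
print(decode_sockaddr_in(raw))  # ('10.4.1.12', 9080)
```

A proxy listening on port 15001 would call original_dst(conn) right after accept() to learn where the application actually meant to connect.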

After the transparent hijacking, the sockmap can shorten the packet traversal path and improve forwarding performance in the outbound direction, provided that the kernel version meets the requirements (4.16 and above).


Originally published at https://jimmysong.io.